As a Learning & Development Manager, you face a recurring paradox. You have a small group of highly specialized experts—think mainframe architects, subsea engineers, or forensic accountants—who are critical to your operations. Yet, finding effective training for them feels impossible. The market is flooded with generic courses that promise the world but deliver little relevance to the unique challenges your team tackles daily. The standard advice you receive is to “go custom,” but this suggestion often glosses over the immense difficulty of the task.

The conventional approach to custom content—interviewing a Subject Matter Expert (SME), storyboarding a course, and producing videos—is fundamentally broken for hyper-specialized roles. It treats deep expertise as static information that can be neatly packaged and delivered. This assumption ignores two critical realities: the knowledge is often tacit and hard to articulate, and the tools and processes involved are in a constant state of flux. The result is an expensive, time-consuming project that delivers a course that is already obsolete by the time it launches.

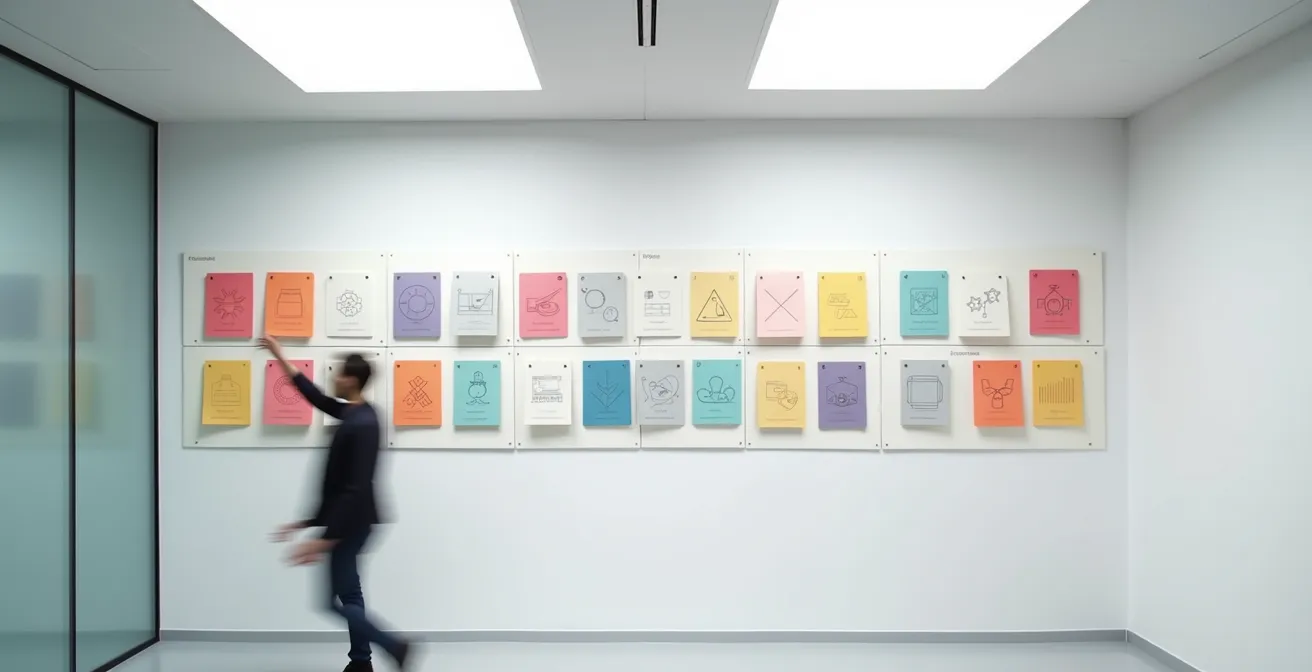

But what if the goal wasn’t to create a perfect, finished “course”? What if, instead, the objective was to build a resilient and evolving knowledge architecture? This article reframes the challenge entirely. We will move beyond the flawed “one-and-done” course model and explore a strategic framework for capturing, structuring, and maintaining niche expertise. This approach prioritizes modularity, targeted formats, and a lifecycle management mindset, ensuring your training investment produces tangible skills, not just shelf-ware.

This guide provides a structured path to developing training that works for your most specialized roles. We will deconstruct the problem, from initial budget considerations to long-term maintenance, offering practical strategies at each stage.

Summary: A Guide to Niche Role Training Architecture

- Why Buying “One-Size-Fits-All” Courses Wastes 60% of Your Budget

- How to Interview Subject Matter Experts Who Hate Explaining Things?

- Internal Build or Specialized Vendor: Which Guarantees Accuracy?

- The Versioning Error That Makes Your Specialized Course Useless in 6 Months

- How to Patch a Curriculum Without Redoing the Entire Course?

- Generic Off-the-Shelf Library or Custom Build: Which Yields Better ROI?

- Watch or Read: Which Format Solves Technical Blockers Faster?

- How to Keep Your Technical Team Sharp When Tools Change Monthly?

Why Buying “One-Size-Fits-All” Courses Wastes 60% of Your Budget

The appeal of off-the-shelf training is undeniable: it’s fast, affordable, and seemingly comprehensive. However, for niche roles, this convenience comes at a steep, often hidden, cost. The core issue is a mismatch in relevance. While a generic course on “Advanced Project Management” might cover broad principles, it will completely miss the specific regulatory constraints, proprietary software, and unwritten team protocols that define the reality of a project manager in a pharmaceutical R&D setting. This gap between generic theory and specific application is where your training budget evaporates.

Research on corporate training points the same way. Work on personalized learning suggests that many ‘one-size-fits-all’ programs suffer high attrition and low engagement precisely because employees don’t see an immediate application to their work. The budget math follows directly: if an analysis of a generic course shows that only 40% of the content applies to a specialist’s role (a common scenario), then the remaining 60% of your per-user license fee pays for material that person cannot use. This isn’t just a financial loss; it’s a loss of employee time and of trust in L&D initiatives.
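The arithmetic behind the 40% applicable, 60% wasted example can be sketched in a few lines. This is a minimal illustration, not a costing model: the function name and the team size of 12 are hypothetical, and the $150 fee is only an example figure.

```python
def wasted_license_spend(fee_per_user: float, users: int, applicable_share: float) -> float:
    """Return the part of total license spend that buys content learners cannot apply."""
    if not 0.0 <= applicable_share <= 1.0:
        raise ValueError("applicable_share must be between 0 and 1")
    total_spend = fee_per_user * users
    return total_spend * (1.0 - applicable_share)


# Hypothetical inputs: a $150 license, 12 specialists, 40% of the content applicable.
wasted = wasted_license_spend(fee_per_user=150.0, users=12, applicable_share=0.40)
print(f"Spend on inapplicable content: ${wasted:,.2f}")
```

The applicable share is the input that matters most here, and it has to come from an actual content audit against the role rather than from the vendor's course description.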

The problem is one of knowledge dilution. Generic courses are designed for the widest possible audience, forcing them to smooth over the very details that make your experts effective. They teach the “what” but completely miss the “how” and “why” within your organization’s unique context. This superficiality fails to build the deep, procedural, and contextual understanding required for high-stakes, specialized work. Ultimately, you’re left supplementing the generic course with internal mentoring and on-the-job training, duplicating effort and negating the initial cost savings.

How to Interview Subject Matter Experts Who Hate Explaining Things?

The success of any custom training hinges on extracting knowledge from your Subject Matter Experts (SMEs). Yet, many L&D professionals hit a wall: the most brilliant experts are often the most difficult to interview. They may be impatient, use impenetrable jargon, or struggle to articulate processes that have become pure “muscle memory.” This isn’t a personal failing; it’s a natural consequence of deep expertise. The key is to shift your approach from a traditional Q&A to a structured process of observation and guided discovery.

As the team at Technical Writer HQ notes in their guide on the role of SMEs, the issue is one of skill translation: experts know their field deeply but may lack the skills to explain it to non-technical audiences. Your role, therefore, is not just to ask questions but to act as a translator, learning the topic well enough to decide what information is relevant for the learner.

Subject matter experts are experts in their field. However, they might lack the skills required for communicating with non-technical audiences. This is where technical writers come in: they conduct detailed subject matter expert interviews to learn and understand the topics in question and determine what information is relevant.

– Technical Writer HQ, What is a Subject Matter Expert (SME)?

Instead of starting with “Can you explain how you do X?”, begin by observing. Use a technique called “Observe, then Inquire.” Ask the SME to perform the task while you watch, either in person or via screen share. You are not just a spectator; you are an active documentarian, sketching out the workflow, noting the tools used, and identifying decision points. This visual map becomes your primary interview tool.

With your workflow sketch in hand, your questions become specific and contextual. Instead of “What’s the next step?”, you can ask, “I see you clicked on this specific module here. What criteria made you choose that option over the other two?” This method respects the SME’s time by focusing on the critical nuances, not the basic steps. It helps them articulate the tacit knowledge—the unconscious decisions and mental models—that they would never think to mention in a standard interview.

Internal Build or Specialized Vendor: Which Guarantees Accuracy?

Once you’ve committed to custom training, the next major decision is whether to build it in-house or hire a specialized vendor. There’s no single right answer; the choice depends on your priorities regarding accuracy, context, cost, and control. Neither path is an inherent guarantee of quality, but each offers a different type of accuracy.

An internal build, led by your L&D team in close collaboration with in-house SMEs, excels at achieving contextual fidelity. Your internal team understands the company culture, the unwritten rules, and the specific jargon that a vendor could never fully grasp. They can embed the training with real-world scenarios and internal process details that make it immediately relevant to employees. However, this path often requires a higher initial investment in terms of time and resources, and your capacity to scale content creation is limited by your team’s size.

A specialized vendor, on the other hand, provides factual and instructional accuracy. They bring expertise in instructional design, media production, and learning technologies that your internal team may lack. They can build polished, engaging, and technically sound training modules more quickly by leveraging existing frameworks. The trade-off is often a loss of nuanced context. While the information will be correct, it may feel generic and miss the specific “flavor” of how your company operates. Furthermore, you may have limited control over the intellectual property (IP) and face delays when updates are needed.

This decision can be best understood through a direct comparison of the key factors involved.

| Criteria | Internal Build | Specialized Vendor |
|---|---|---|
| Contextual Fidelity | Excellent – includes unwritten rules, company jargon, cultural nuances | Good – achieves factual accuracy but may miss context |
| Initial Cost | Higher – requires internal resources and time | Lower – leverages vendor’s existing frameworks |
| IP Control | Full ownership and control | Limited – vendor may retain IP rights |
| Update Speed | Fast – can update at business speed | Slower – depends on vendor availability |
| Scalability | Limited by internal resources | High – vendor handles infrastructure |

The Versioning Error That Makes Your Specialized Course Useless in 6 Months

The single greatest threat to niche technical training is content volatility. The tools, processes, and best practices for specialized roles change constantly. A monolithic, 90-minute video course on a specific software workflow can become obsolete the day a critical UI update is released. The “versioning error” is not a technical glitch; it’s a strategic failure to design for change. Treating your course as a static, finished product is a recipe for creating expensive, disposable training.

The solution is to abandon the monolithic model and embrace a modular knowledge architecture. Instead of one long course, you build a system of small, independent, and interconnected content blocks. A “course” then becomes a playlist or pathway through these modules. For example, a training on a data analysis tool could be broken down into modules for “Data Import,” “Cleaning Functions,” “Visualization Setup,” and “Reporting.” When the visualization interface changes, you only need to update or replace that single, small module, not re-shoot the entire program. This approach dramatically reduces maintenance costs and ensures the curriculum remains current.
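One way to picture the "course as a pathway through modules" idea is as a small data model. The sketch below is an assumption-laden illustration (class names, IDs, and version strings are hypothetical, and it assumes Python 3.9 or later), not a description of any particular LMS.

```python
from dataclasses import dataclass, field


@dataclass
class Module:
    """One self-contained content block (video, PDF, quiz, ...)."""
    module_id: str
    title: str
    version: str


@dataclass
class Course:
    """A course holds only an ordered list of module IDs, resolved when viewed."""
    title: str
    pathway: list[str] = field(default_factory=list)

    def resolve(self, library: dict[str, Module]) -> list[Module]:
        # Look up each ID in the shared library so updates appear automatically.
        return [library[module_id] for module_id in self.pathway]


# Hypothetical module library for the data analysis tool example.
library = {
    "import": Module("import", "Data Import", "1.0.0"),
    "cleaning": Module("cleaning", "Cleaning Functions", "1.0.0"),
    "viz": Module("viz", "Visualization Setup", "1.0.0"),
    "reporting": Module("reporting", "Reporting", "1.0.0"),
}
course = Course("Data Analysis Tool", ["import", "cleaning", "viz", "reporting"])

# The visualization interface changes: replace that one module and nothing else.
library["viz"] = Module("viz", "Visualization Setup", "2.0.0")

for module in course.resolve(library):
    print(module.title, module.version)
```

Because the course stores references rather than copies, every pathway that includes the visualization module picks up the replacement without being edited.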

Building this architecture requires a disciplined approach to content management from day one. It means establishing clear ownership and a proactive system for tracking changes. Without a robust versioning strategy, even a modular system can descend into chaos. A clear plan ensures that learners always access the most current information and that updates are handled efficiently, rather than in a state of panic after a critical process breaks.

To prevent your training from becoming obsolete, you must build a system designed for evolution. The following checklist outlines the essential practices for maintaining a living curriculum.

Action Plan: Implementing a Future-Proof Versioning System

- Adopt Semantic Versioning: Implement a clear MAJOR.MINOR.PATCH naming convention for your content modules (e.g., v2.0.0 for a major rewrite, v1.1.0 for a minor update, v1.0.1 for a typo fix) to track changes systematically.

- Create a Public Changelog: Maintain a simple, accessible document that communicates the scope and date of all content updates, so learners and stakeholders know what’s new.

- Design Modular Blocks: Structure every piece of content—be it a video, a PDF, or a quiz—as a self-contained block that can be updated or replaced independently of the others.

- Establish a Content Owner: Assign a specific person (separate from the SME) the formal responsibility for monitoring for process changes and triggering the update workflow.

- Use Branching for Remediation: In interactive content, use branching logic to direct learners who struggle with a concept to supplemental materials, creating personalized paths without altering the core module.
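The versioning and changelog items in the checklist can be sketched as a small helper. This is a minimal sketch under stated assumptions: it maps major rewrites, minor updates, and typo fixes onto the usual MAJOR.MINOR.PATCH positions, and the class name, function name, and changelog line format are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ContentVersion:
    major: int
    minor: int
    patch: int

    def bump(self, change: str) -> "ContentVersion":
        """Return the next version; lower positions reset to zero on a bump."""
        if change == "major":   # major rewrite of the module
            return ContentVersion(self.major + 1, 0, 0)
        if change == "minor":   # minor content update
            return ContentVersion(self.major, self.minor + 1, 0)
        if change == "patch":   # typo or wording fix
            return ContentVersion(self.major, self.minor, self.patch + 1)
        raise ValueError(f"unknown change type: {change!r}")

    def __str__(self) -> str:
        return f"v{self.major}.{self.minor}.{self.patch}"


def changelog_entry(module: str, version: ContentVersion, summary: str, when: date) -> str:
    """Format one line for a learner-facing changelog (format is an assumption)."""
    return f"{when.isoformat()} | {module} {version} | {summary}"


current = ContentVersion(1, 0, 0)
updated = current.bump("minor")
print(changelog_entry("Visualization Setup", updated, "Updated chart menu steps", date.today()))
```

Keeping the bump rule in one place means the content owner only has to classify the change; the version label and changelog line follow from that decision.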

How to Patch a Curriculum Without Redoing the Entire Course?

Once you have a modular architecture, the question becomes how to “patch” the curriculum efficiently when a small part becomes outdated. Re-shooting a full video or rewriting a large manual for a minor change is not feasible. The answer lies in leveraging microlearning and targeted, interactive formats that can be deployed quickly as surgical updates.

The core principle is to match the patch format to the nature of the update. If a single step in a 10-step software process has changed, you don’t need a new 10-minute video. A 45-second screen recording showing only the new step, accompanied by a brief text explanation, is far more effective. This respects the learner’s time and cognitive load. After all, corporate training research confirms that engagement plummets with length; studies show that nearly two-thirds of people won’t watch videos over 20 minutes long, and for technical updates, that tolerance is even lower.

A powerful technique for patching is the use of interactive video overlays or “hotspots.” Instead of re-editing an existing video, you can add a non-destructive layer on top of it. For instance, if a button in a software demo has moved, you can add a pop-up text box or a clickable icon at the appropriate timestamp that says, “As of version 3.2, this button is now located in the top-right menu.” This preserves the original asset while providing a just-in-time correction. Companies like Brightcove have built entire platforms around this concept, allowing instructional designers to embed forms, polls, and updates directly within video content, making training a dynamic, two-way conversation.
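Conceptually, a hotspot patch is just a timed note stored next to the video rather than inside it. The sketch below illustrates that idea with a hypothetical data structure; it is not the API of Brightcove or any other video platform, and the timestamps are placeholders (Python 3.9 or later assumed).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Overlay:
    """A text note shown on top of an unchanged video between two timestamps."""
    start_s: float
    end_s: float
    text: str


def active_overlays(overlays: list[Overlay], position_s: float) -> list[Overlay]:
    """Return the notes that should be visible at the given playback position."""
    return [o for o in overlays if o.start_s <= position_s < o.end_s]


# Hypothetical patch list for one demo video.
overlays = [
    Overlay(90.0, 100.0, "As of version 3.2, this button is now located in the top-right menu."),
]

for note in active_overlays(overlays, position_s=95.0):
    print(note.text)
```

Since the original video file is never modified, removing or revising a correction means editing one entry in the list.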

This “patching” mindset extends to all content. A change in a compliance code doesn’t require a new module; it can be addressed with an updated, one-page PDF linked from the original content. A new best practice can be shared via a short, peer-recorded audio clip. By thinking of updates as small, targeted interventions rather than major overhauls, you create a truly agile and resilient learning ecosystem.

Generic Off-the-Shelf Library or Custom Build: Which Yields Better ROI?

When presenting a budget, L&D Managers must justify the high upfront cost of a custom build versus the low per-user price of a generic library. The key is to frame the discussion around long-term Return on Investment (ROI), not just initial outlay. While a generic course might cost $150 per user, its ROI is severely limited if only a fraction of it is applicable. True ROI comes from targeted performance improvement, which is the domain of custom-built content.

A custom build is a capital investment in your team’s specific capabilities. It is designed to solve your organization’s unique problems, reduce specific error rates, and align with your exact workflows. This high degree of relevance directly impacts performance KPIs. For example, a custom training for a niche sales role might focus on handling objections related to your three main competitors, a topic no generic course would cover. The resulting increase in the win rate for those specific scenarios provides a measurable and direct return.

Furthermore, custom training sends a powerful message to employees: the company is investing in their specific professional development. This has a significant, though harder to quantify, impact on employee retention and engagement, especially among top-performing specialists who crave mastery. A generic library, by contrast, can feel impersonal and demonstrates a lack of understanding of their unique needs. The long-term cost of replacing a departed specialist far exceeds the initial savings of an off-the-shelf training solution.

The financial and strategic trade-offs become crystal clear when laid out in a comparative analysis.

| Metric | Generic Off-the-Shelf | Custom Build |
|---|---|---|
| Initial Investment | Low ($50-200 per user) | High ($5,000-50,000+ total) |
| Time to Deployment | Immediate | 4-12 weeks |
| Relevance to Role | 40-60% applicable | 95-100% applicable |
| Employee Retention Impact | Minimal | Significant – shows investment in development |
| Performance KPI Impact | Generic improvements | Targeted improvements in specific metrics |
| Long-term ROI | Low – requires supplemental training | High – reduces errors and training time |
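To put a number on the long-term ROI row, the standard formula is net benefit divided by cost. The sketch below applies it with placeholder figures: the $20,000 cost sits inside the custom-build range in the table, while the $30,000 benefit is purely hypothetical and would have to come from your own KPI data (for example, fewer errors or a higher win rate).

```python
def simple_roi(benefit: float, cost: float) -> float:
    """Return ROI as a fraction: (benefit - cost) / cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (benefit - cost) / cost


# Placeholder figures for illustration only; substitute measured values.
custom_roi = simple_roi(benefit=30_000.0, cost=20_000.0)
print(f"Custom build ROI: {custom_roi:.0%}")
```

The formula is only as good as the benefit estimate, so it is worth agreeing with stakeholders on which performance metric counts as the benefit before the build starts.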

Watch or Read: Which Format Solves Technical Blockers Faster?

The choice between video and text in technical training is not a matter of learner preference; it’s a strategic decision based on the cognitive task at hand. Selecting the wrong format for the job can create friction and hinder learning, while the right format can unblock a technical challenge in seconds. The guiding principle should be “format-function fit.”

Video excels at demonstrating complex, dynamic, or spatial processes. When you need to show how to assemble a piece of hardware, navigate a multi-step visual interface, or perform a physical technique, video is unparalleled. It conveys flow, timing, and motion in a way that static text and images cannot. As training experts from companies like Google have shown, using videos of real people in real situations, such as security engineers walking through their process, makes complex technical roles feel more accessible and human. These “day-in-the-life” videos are excellent for onboarding and conceptual understanding.

Text, on the other hand, is superior for tasks that require precision, reference, and direct application. No developer wants to pause a video to copy a line of code. For procedural tasks involving code snippets, API keys, or specific commands, a well-formatted text document or cheat sheet is faster to use and less error-prone. Text is scannable, searchable, and allows for easy copy-pasting, minimizing errors. This is why searchable transcripts should be a mandatory companion to all technical training videos; they provide a “best of both worlds” solution, allowing for quick reference after a concept has been visually demonstrated.

The optimal training architecture uses a hybrid approach, where each format is deployed for its specific strengths. A long video should be broken down into chapters with time-stamped navigation, and any code or commands mentioned should be provided in a separate, easily accessible text file. This thoughtful integration of formats is the hallmark of effective technical instruction.

Key Takeaways

- Generic courses fail for niche roles due to a “relevance gap,” effectively wasting over half of the training budget on inapplicable content.

- Adopt a “knowledge architecture” mindset: build modular, independent content blocks that can be updated individually to combat content volatility.

- Master expertise extraction by observing SMEs first and using specific, contextual questions to uncover their tacit knowledge.

- Select the training format (video vs. text) based on the cognitive task, not just learner preference, to solve technical blockers faster.

How to Keep Your Technical Team Sharp When Tools Change Monthly?

For technical teams working on the cutting edge, the challenge isn’t a one-time training event; it’s creating a culture and a system of continuous learning. When tools and platforms can have significant updates monthly, a static training library is insufficient. The goal is to build an ecosystem that facilitates rapid knowledge dissemination and empowers the team to learn from each other. This shifts the L&D function from being a “content creator” to a “learning facilitator.”

This is especially difficult because the very act of formalizing knowledge is unnatural for many experts. Harvard research highlights this challenge, revealing that as many as 82% of people struggle to codify their own experiences and insights. Relying solely on formal courses will always leave you behind the curve. A more agile approach involves creating channels for informal, just-in-time knowledge sharing. For example, you can institute peer-taught “demo days” where team members who have mastered a new feature present it to the rest of the group. This is faster and often more relevant than a polished, externally produced course.

Another powerful strategy is to create a curated internal feed or newsletter that summarizes critical changelogs and links to expert tutorials. This reduces the cognitive load on the team, who no longer have to sift through mountains of documentation themselves. You can also gamify the process by establishing a “solution bounty” system: when a team member documents a workaround for a new bug or a clever use of a new feature, they receive recognition or a small reward. This incentivizes the proactive documentation that is crucial for keeping collective knowledge current.

Ultimately, keeping a technical team sharp is about fostering a dynamic learning environment. It involves a blend of structured onboarding to bring new members up to speed, microlearning videos for specific skills, and, most importantly, robust channels for peer-to-peer knowledge exchange. It is a living system, not a static library.

To truly transform your approach, begin by auditing your current training for a single niche role. Map out its existing content, identify the most volatile components, and start a conversation with your SME not about a “course,” but about building a shared, living knowledge base. This shift in perspective is the first and most critical step toward building training that truly empowers your experts.